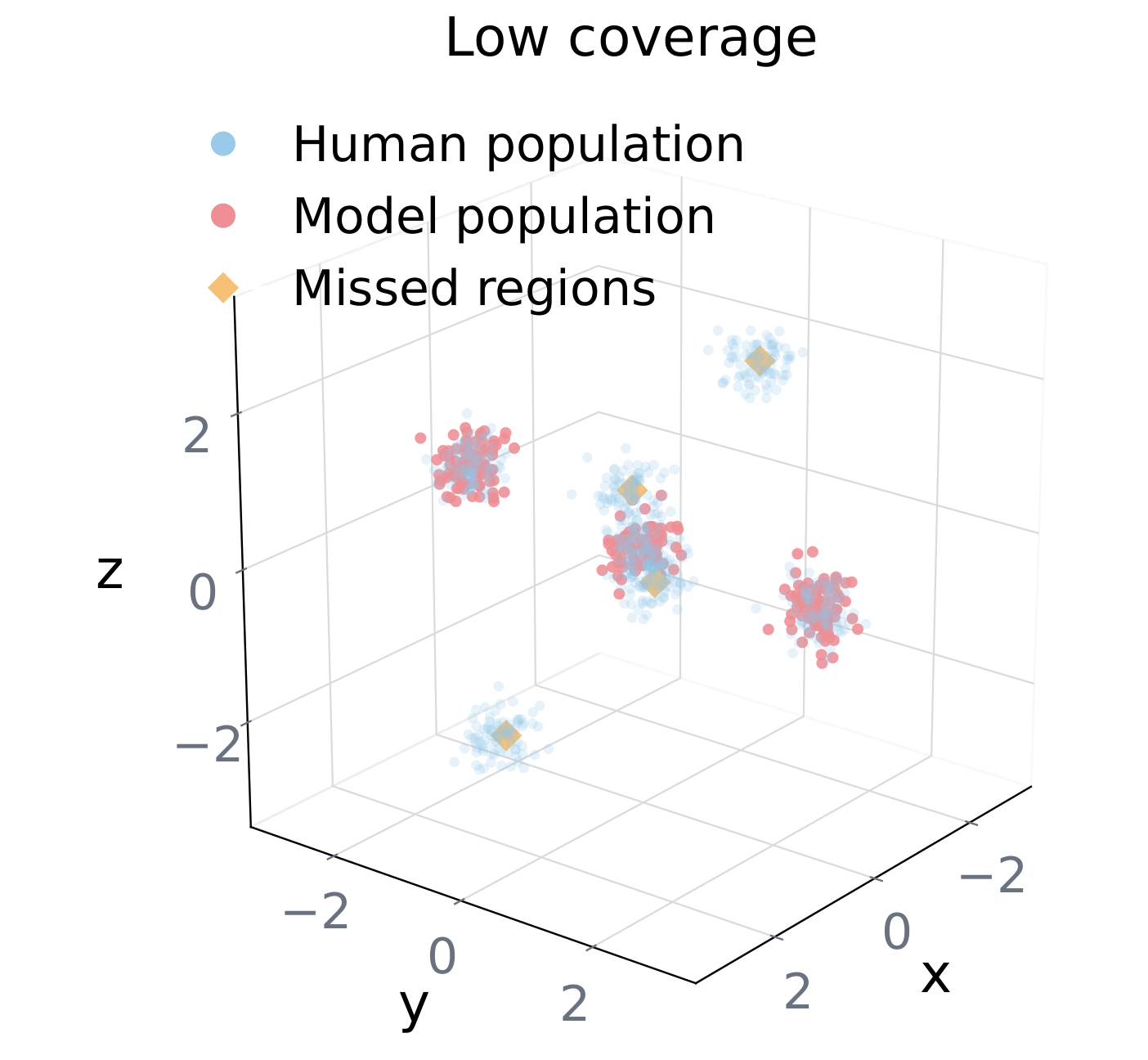

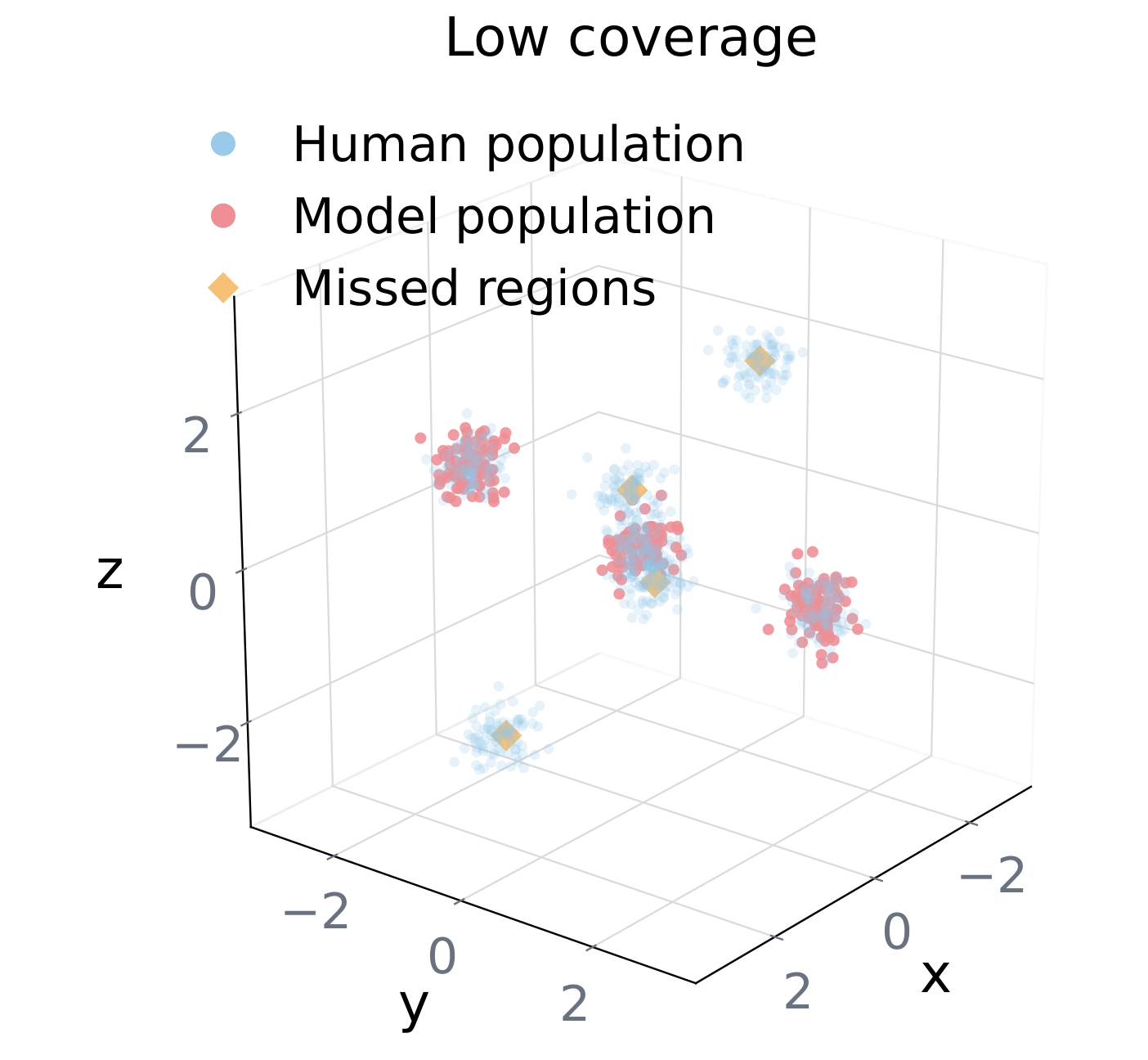

¹CMU, ²UChicago, ³MIT, ⁴2077.ai, ⁵UTokyo, ⁶RIKEN AIP, ⁷JHU

LLM agents are increasingly deployed as participants in simulated societies [?], synthetic survey respondents, and proxies for user testing [?]. These applications rest on a critical assumption: given a persona with 20+ attributes (age, gender, nationality, religion, political leaning, occupation…), the model will act like an individual whose behavior reflects the complex intersection of all those attributes.

We find this is systematically false. When instructed to role-play a persona with 26 dimensions, LLMs retain only a handful of stereotypically salient attributes and silently discard the rest. A population of supposedly distinct agents degenerates into a few stereotyped clusters. We call this structural homogenization Persona Collapse.

Persona Collapse: a failure mode in which agents assigned distinct profiles nonetheless converge into a narrow behavioral mode, producing a homogeneous simulated population, even when each agent individually looks faithful to its prompt.

Existing evaluations miss this. They measure per-agent fidelity in isolation — is this one persona acting plausibly? — and are therefore blind to population-level collapse. You can pass every individual fidelity check and still produce a world in which everybody sounds the same.

Here are six scenarios where humans regularly disagree with each other — along political, religious, racial, or identity lines. Given a thousand personas spanning every corner of the demographic space, do the agents disagree too?

Source: moral-reasoning responses from ten LLMs on the Liu et al. 2025 dilemma dataset [?]. Each scenario is posed to 1,144 persona-prompted agents on a 1–5 Likert scale (1 = strongly favor A, 5 = strongly favor B).

We represent a simulated population as a Behavioral Trait Matrix $B \in \mathbb{R}^{N \times D}$, where each row is one persona's response across D behavioral items. A structurally healthy population should satisfy three independent criteria; failing any one of them signals collapse.

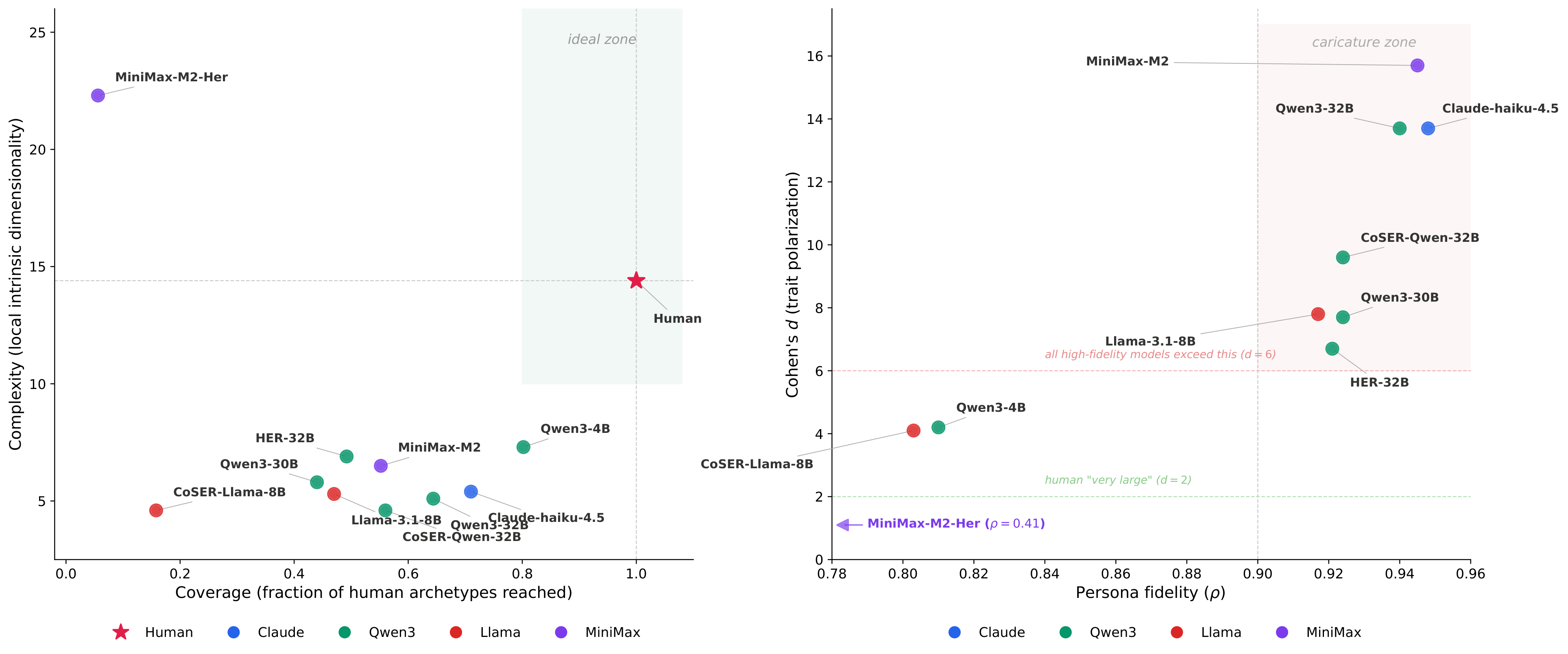

Do agents span the full human space?

Fraction of human reference neighborhoods reached by at least one model-generated persona [?]. Low coverage means the model over-samples a modal region and neglects the tails.
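A minimal sketch of one way to compute such a coverage score. The page does not say how a "neighborhood" is defined, so this assumes each human point's neighborhood is the ball reaching its k-th nearest human neighbor. The function name `coverage`, the choice `k=5`, and the toy Gaussian data are all illustrative assumptions.

```python
import math
import random

def coverage(human, model, k=5):
    """Fraction of human points whose k-NN ball contains at least one model point.

    Assumption: the neighborhood radius of a human point is the distance to
    its k-th nearest *other* human point.
    """
    reached = 0
    for i, h in enumerate(human):
        dists = sorted(math.dist(h, o) for j, o in enumerate(human) if j != i)
        radius = dists[k - 1]
        if any(math.dist(h, m) <= radius for m in model):
            reached += 1
    return reached / len(human)

rng = random.Random(0)
D = 5
human = [[rng.gauss(0, 1) for _ in range(D)] for _ in range(200)]
# Toy "collapsed" population: same center as the humans, far too little spread.
collapsed = [[rng.gauss(0, 0.1) for _ in range(D)] for _ in range(200)]

cov_self = coverage(human, human)           # every ball contains its own center
cov_collapsed = coverage(human, collapsed)  # tails of the human cloud are missed
print(cov_self, cov_collapsed)
```

In this toy setup the collapsed population only reaches human neighborhoods near the center of the cloud, which is the "over-samples a modal region" pattern described above.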

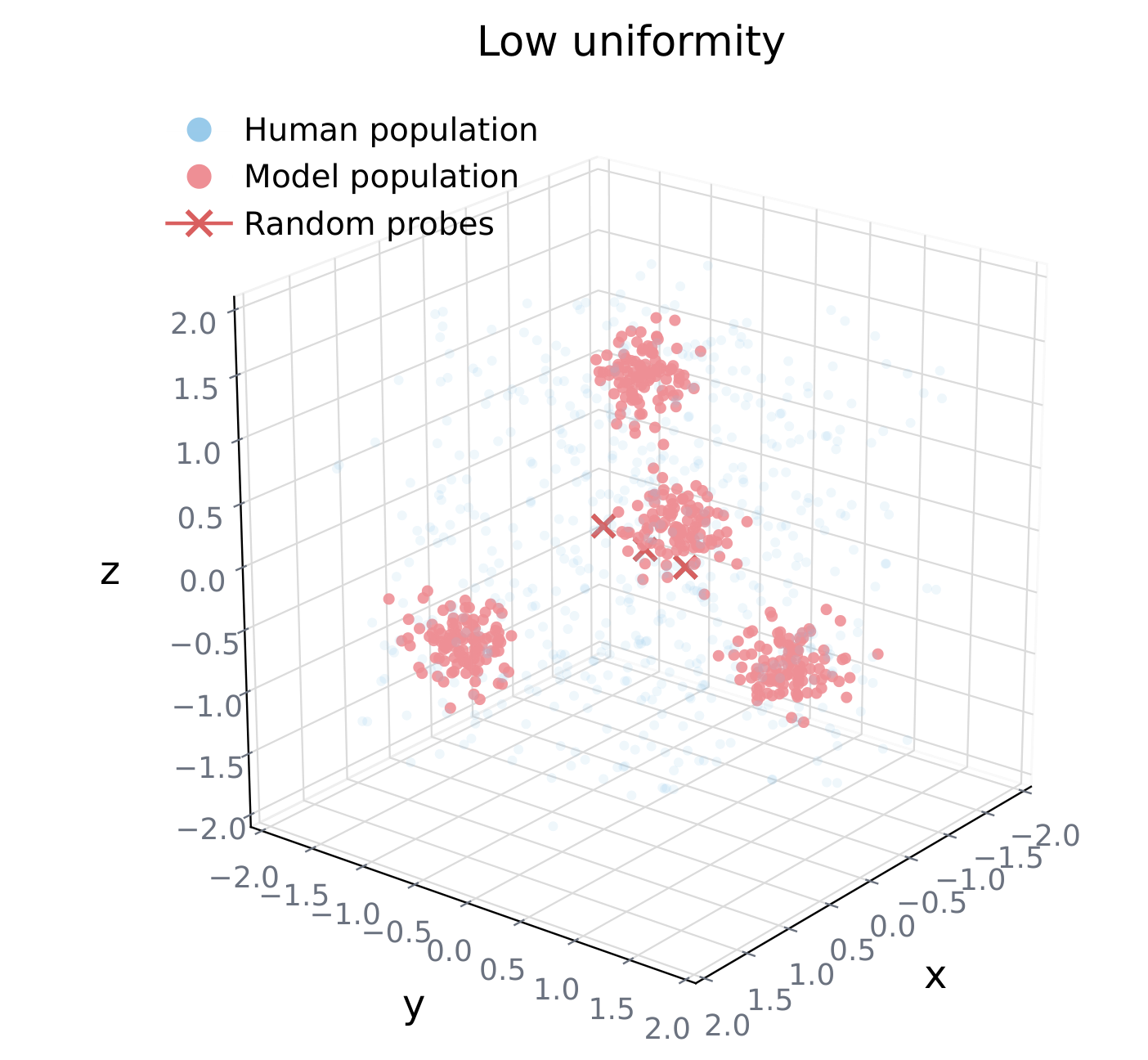

Do agents spread evenly across that space?

Hopkins statistic [?]: compares nearest-neighbor distances from data vs. random probes. Humans sit at H ≈ 0.5. Models either clump (H → 1) or grid-lock (H → 0).
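As a rough illustration, the Hopkins statistic can be sketched in a few lines. This version uses plain (unexponentiated) nearest-neighbor distances and draws probes uniformly from the data's bounding box; both are common choices but are assumptions here, as are the function name `hopkins`, the sample size `m`, and the three toy 2-D datasets.

```python
import math
import random

def hopkins(data, m=50, seed=0):
    """H = sum(u) / (sum(u) + sum(w)).

    w: distance from m sampled data points to their nearest other data point.
    u: distance from m uniform random probes to their nearest data point.
    """
    rng = random.Random(seed)
    dim = len(data[0])
    lo = [min(p[j] for p in data) for j in range(dim)]
    hi = [max(p[j] for p in data) for j in range(dim)]
    idx = rng.sample(range(len(data)), m)
    w = [min(math.dist(data[i], q) for j, q in enumerate(data) if j != i)
         for i in idx]
    probes = [[rng.uniform(lo[j], hi[j]) for j in range(dim)] for _ in range(m)]
    u = [min(math.dist(p, q) for q in data) for p in probes]
    return sum(u) / (sum(u) + sum(w))

rng = random.Random(1)
# Spatially random points: H should land near 0.5.
uniform = [[rng.random(), rng.random()] for _ in range(400)]
# Four tight clumps: probes are far from data, so H approaches 1.
centres = [(0.2, 0.2), (0.2, 0.8), (0.8, 0.2), (0.8, 0.8)]
clumped = [[cx + rng.gauss(0, 0.01), cy + rng.gauss(0, 0.01)]
           for cx, cy in centres for _ in range(100)]
# Regular lattice: data points are as far apart as possible, so H drops below 0.5.
grid = [[i / 19, j / 19] for i in range(20) for j in range(20)]

h_uniform, h_clumped, h_grid = hopkins(uniform), hopkins(clumped), hopkins(grid)
print(h_uniform, h_clumped, h_grid)
```

The three toy datasets mirror the three regimes named above: random, clumped, and grid-locked.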

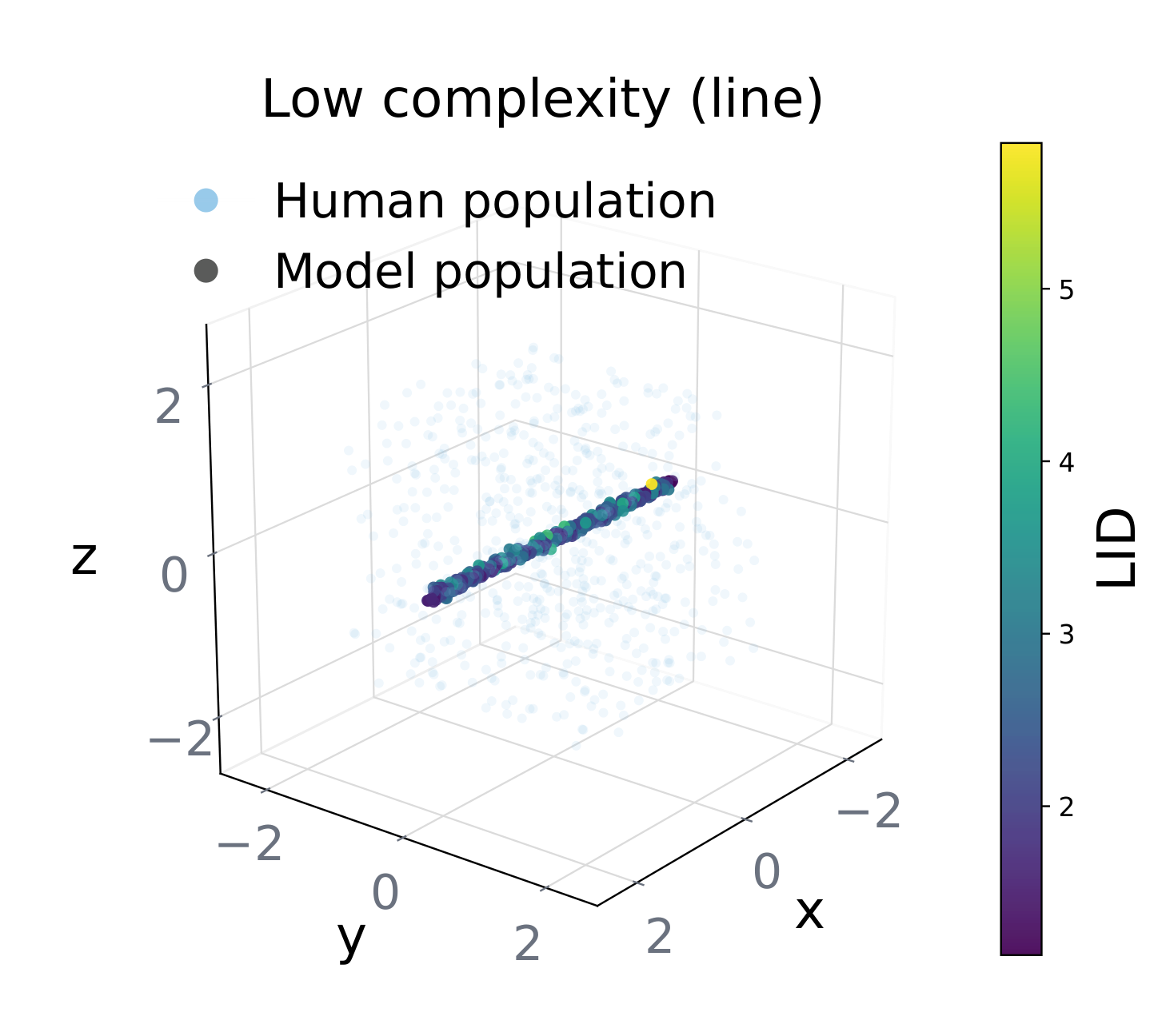

Is the variation genuinely high-dimensional?

Local Intrinsic Dimensionality [?]: imagine 2,000 points along a line in a 44-D room. Coverage and Uniformity both look great — but intrinsic dimension is 1. All "diversity" is motion along one axis.
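The line-in-a-room thought experiment can be reproduced with a small sketch. It assumes the k-nearest-neighbor maximum-likelihood LID estimator; the page does not state which estimator it uses. To stay fast in pure Python, it averages over a random subset of query points. The names `lid_mle`, `k`, and `n_queries` are illustrative.

```python
import math
import random

def lid_mle(data, k=20, n_queries=50, seed=0):
    """Mean k-NN maximum-likelihood LID estimate over sampled query points."""
    rng = random.Random(seed)
    estimates = []
    for i in rng.sample(range(len(data)), n_queries):
        # Distances to the k nearest other points, in ascending order.
        r = sorted(math.dist(data[i], q)
                   for j, q in enumerate(data) if j != i)[:k]
        # LID = -1 / mean(log(r_i / r_k)); the i = k term contributes log(1) = 0.
        mean_log = sum(math.log(ri / r[-1]) for ri in r) / k
        estimates.append(-1.0 / mean_log)
    return sum(estimates) / len(estimates)

rng = random.Random(0)
D = 44
direction = [rng.gauss(0, 1) for _ in range(D)]
# 2,000 points along a single line embedded in 44-D.
line = [[t * d for d in direction] for t in (rng.random() for _ in range(2000))]
# 2,000 points that genuinely vary in all 44 directions.
cloud = [[rng.gauss(0, 1) for _ in range(D)] for _ in range(2000)]

lid_line, lid_cloud = lid_mle(line), lid_mle(cloud)
print(lid_line, lid_cloud)
```

The line's estimate stays close to 1 regardless of the ambient dimension, while the isotropic cloud scores far higher. Finite-sample k-NN estimators tend to underestimate very high dimensions, so the cloud's value should be read as a lower bound rather than as 44.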

These three criteria can fail independently. A model can look diverse on one axis and be degenerate on another, or collapse in one task and not another. To localize where simulation breaks, we pair these with item-level diagnostics: Effective Likert range, Tucker's ϕ [?], variance decomposition, η², and incremental R².
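Two of these diagnostics have standard textbook definitions, sketched here for concreteness. Tucker's ϕ is the congruence coefficient between two vectors (for example, two loading profiles), and η² is the share of total variance explained by group membership. The helper names and the toy Likert data are illustrative assumptions, and how the paper applies each statistic is not specified on this page.

```python
import math

def tucker_phi(x, y):
    """Tucker's congruence coefficient: sum(x*y) / sqrt(sum(x^2) * sum(y^2))."""
    num = sum(a * b for a, b in zip(x, y))
    den = math.sqrt(sum(a * a for a in x) * sum(b * b for b in y))
    return num / den

def eta_squared(groups):
    """Between-group sum of squares divided by total sum of squares."""
    values = [v for g in groups.values() for v in g]
    grand = sum(values) / len(values)
    ss_total = sum((v - grand) ** 2 for v in values)
    ss_between = sum(len(g) * (sum(g) / len(g) - grand) ** 2
                     for g in groups.values())
    return ss_between / ss_total

# Proportional profiles are perfectly congruent; orthogonal ones are not.
phi_same = tucker_phi([1, 2, 3], [2, 4, 6])
phi_orth = tucker_phi([1, 0], [0, 1])

# Toy Likert responses keyed by a coarse demographic label (hypothetical data).
stereotyped = {"A": [1, 1, 1], "B": [5, 5, 5]}   # all variance is between groups
individual = {"A": [1, 5], "B": [2, 4]}          # all variance is within groups
eta_stereo = eta_squared(stereotyped)
eta_indiv = eta_squared(individual)
print(phi_same, phi_orth, eta_stereo, eta_indiv)
```

An η² near 1 on a coarse demographic label is the signature of variation that tracks categories rather than individuals.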

Personas combine demographics, psychographics, and individualized traits. Of 2,000 sampled combinations, 856 were filtered out for inconsistency, leaving 1,144 personas.

Models are grouped into general-purpose and role-play tracks, enabling controlled comparisons of SFT / RL effects.

The human reference sits at Coverage = 1.0, LID = 14.4. Every model lives in one of three failure modes.

A persona with 26 attributes must be compressed into behavior. Not all attributes make it through. Across every model, the same hierarchy emerges in free-text self-introductions — and once we know the ranking, we know what will be erased.

No model mentions social class in more than 43% of introductions. In LLM-based social simulations, socioeconomic diversity is at high risk of being systematically underrepresented across current models — a subtle, structural bias that shallow-fidelity evaluations cannot see.

The mechanism is trivial: the easiest way to ensure that "High Extraversion" personas rank above "Low Extraversion" personas is to push the two groups to opposite extremes. Fidelity measured in isolation is therefore misleading: a high ρ can simply mean better caricature manufacturing.
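A toy simulation makes the mechanism concrete. It assumes ρ is a Spearman rank correlation between the assigned trait level and the agent's score, which this page does not spell out. The group means, noise levels, and helper names are invented purely for illustration.

```python
import math
import random

def average_ranks(xs):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(xs)), key=lambda i: xs[i])
    ranks = [0.0] * len(xs)
    start = 0
    while start < len(order):
        end = start
        while end + 1 < len(order) and xs[order[end + 1]] == xs[order[start]]:
            end += 1
        for pos in range(start, end + 1):
            ranks[order[pos]] = (start + end) / 2 + 1
        start = end + 1
    return ranks

def spearman(a, b):
    """Spearman correlation = Pearson correlation of the average ranks."""
    ra, rb = average_ranks(a), average_ranks(b)
    ma, mb = sum(ra) / len(ra), sum(rb) / len(rb)
    cov = sum((x - ma) * (y - mb) for x, y in zip(ra, rb))
    return cov / math.sqrt(sum((x - ma) ** 2 for x in ra)
                           * sum((y - mb) ** 2 for y in rb))

def within_group_variance(levels, scores):
    """Pooled variance of scores around their own group mean."""
    total = 0.0
    for level in set(levels):
        g = [s for l, s in zip(levels, scores) if l == level]
        mean = sum(g) / len(g)
        total += sum((s - mean) ** 2 for s in g)
    return total / len(scores)

def clip(x):
    return min(5.0, max(1.0, x))  # keep scores on the 1-5 scale

rng = random.Random(0)
levels = [0] * 100 + [1] * 100  # 0 = "Low Extraversion", 1 = "High Extraversion"
# Overlapping groups with real individual differences.
realistic = [clip(rng.gauss(2.5 + l, 0.8)) for l in levels]
# Groups pushed to opposite extremes with almost no individual variation.
caricature = [clip(rng.gauss(1.2 + 3.6 * l, 0.1)) for l in levels]

rho_real, rho_car = spearman(levels, realistic), spearman(levels, caricature)
var_real = within_group_variance(levels, realistic)
var_car = within_group_variance(levels, caricature)
print(rho_real, rho_car, var_real, var_car)
```

The caricatured population scores higher on ρ while having almost no within-group variance, so a fidelity metric alone would rank it as the better simulation.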

Collapse is multidimensional: models can look diverse on one axis and be structurally degenerate on another.

Collapse is domain-contingent: the same model can be the most collapsed in personality and the most diverse in moral reasoning. Certifying a model "diverse" from a single benchmark is misleading.

Collapse is entangled with stereotyping: variation tracks coarse demographic categories rather than individual differences.

The models with the highest per-persona fidelity produce the most caricatured populations. Fine-tuning for role-play can amplify the problem.

Persona collapse lives in the weights, not the reasoning chain. Thinking / non-thinking modes of the same model produce identical item-level collapse.

Future work should reward within-group variance, not only prototype matching.

@article{xiao2026collapse,
  title={The Chameleon's Limit: Investigating Persona Collapse and Homogenization in Large Language Models},
  author={Xiao, Yunze and Zhang, Vivienne and Yang, Chenghao and Ma, Ningshan and Xuan, Weihao and Huang, Jen-tse},
  journal={arXiv preprint},
  year={2026}
}

References are shown as clickable [?] markers throughout the page. Click any marker, or browse the full list below.